The buzz around data is as high as ever, with so many applications being built on top of it. The question is: how well can I pull the different data streams together to serve my purpose?

You can design a data pipeline manually or use one of the great SaaS products that have made it easy. But first, let's start from the beginning. Knowing how to build a data pipeline is one of the first steps to building an efficient data-informed product.

What is a Data Pipeline?

A data pipeline is a sequence of steps that collects, transforms, and stores data to achieve a purpose. In any organization or data project, we can consider data pipelines the backbone.

I have tried to look at data pipelines as generically as possible: not all pipelines need automation or a continuous flow of data from the source to storage.
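To make the definition concrete, here is a minimal sketch of a pipeline as a plain sequence of steps, run once and by hand. The function names (`collect`, `transform`, `save`, `run_pipeline`) and the sample records are made up for illustration; they are not from any particular tool.

```python
from typing import Callable

Record = dict
Step = Callable[[list[Record]], list[Record]]


def run_pipeline(records: list[Record], steps: list[Step]) -> list[Record]:
    """Pass the records through each step in order."""
    for step in steps:
        records = step(records)
    return records


def collect() -> list[Record]:
    # Stand-in for reading from an application or a third-party source.
    return [{"user": "a", "amount": "10"}, {"user": "b", "amount": "5"}]


def transform(records: list[Record]) -> list[Record]:
    # Convert the amount from text to a number.
    return [{**r, "amount": int(r["amount"])} for r in records]


store: list[Record] = []  # Stand-in for a database table.


def save(records: list[Record]) -> list[Record]:
    store.extend(records)
    return records


result = run_pipeline(collect(), [transform, save])
print(store)
```

Nothing here is scheduled or continuous, and it is still a pipeline: collection, transformation, and storage in a fixed order.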

Building Blocks

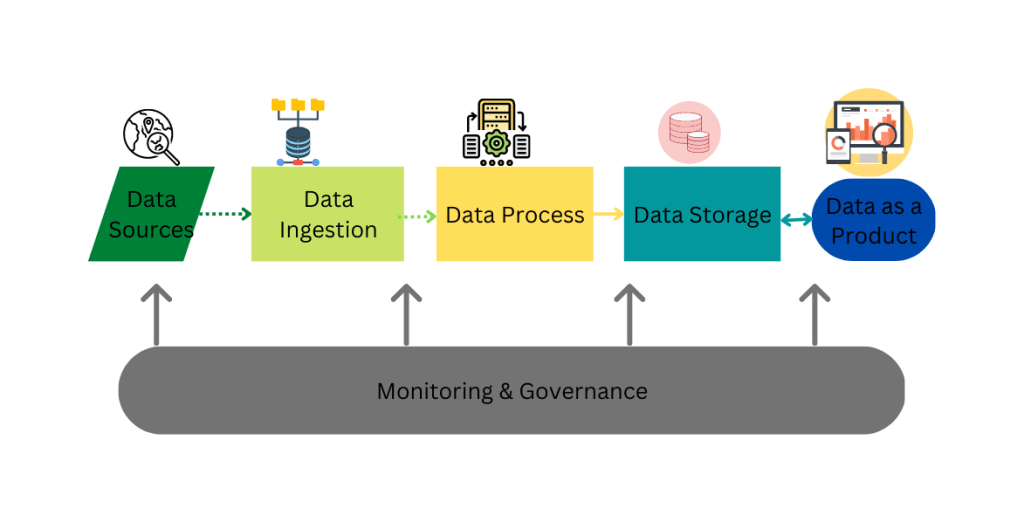

There are core components to every data pipeline.

Using the graphical representation of a data pipeline, let’s take a bird's-eye view of the unique pieces that build a data pipeline.

To create a data pipeline, you pull data from a source. This could be your application, 3rd party data, or both. You also need to decide how you intend to ingest that data: in batches or as a real-time stream?
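The batch versus streaming choice can be sketched in a few lines. This is only an illustration under simple assumptions: the `events` list stands in for a real source, and `ingest_batch` and `ingest_stream` are hypothetical helper names.

```python
from typing import Iterable, Iterator


def ingest_batch(source: Iterable[dict]) -> list[dict]:
    """Batch: read everything that is available, then hand it over at once."""
    return list(source)


def ingest_stream(source: Iterable[dict]) -> Iterator[dict]:
    """Streaming: hand over each record as soon as it arrives."""
    for record in source:
        yield record


events = [{"id": 1}, {"id": 2}, {"id": 3}]

batch = ingest_batch(events)    # all three records together
stream = ingest_stream(events)  # nothing is read yet
first = next(stream)            # only the first record has been processed

print(len(batch), first)
```

With a batch, downstream steps wait for the full set; with a stream, they can start on the first record while the rest is still arriving.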

The next step is processing. Whenever data pipelines or analytics come up in discussion, one flow that gets mentioned is ETL: Extract, Transform & Load. Which flow you pick depends on your system design and the performance level you are trying to achieve. Another way is ELT(L), where the data is loaded before it is transformed; some developers have found this to be faster and more efficient.

Another reason is to avoid the extra checks, or rerunning that step all over again, if for any reason there is a break during the E->T step.
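Here is a small side-by-side sketch of the two flows, using Python's built-in `sqlite3` module as a stand-in for the data store. The table names, sample rows, and the `clean` helper are assumptions made for the example.

```python
import sqlite3

# Raw data as it might arrive from a source: amounts are untidy text.
raw_rows = [("alice", " 10 "), ("bob", "5"), ("carol", "n/a")]


def clean(amount: str):
    """Return the amount as an int, or None if it is not a number."""
    text = amount.strip()
    return int(text) if text.isdigit() else None


# ETL: transform in application code, then load only the clean result.
etl_db = sqlite3.connect(":memory:")
etl_db.execute("CREATE TABLE sales (name TEXT, amount INTEGER)")
etl_db.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [(name, clean(a)) for name, a in raw_rows if clean(a) is not None],
)

# ELT: load the raw data first, then transform inside the store with SQL.
elt_db = sqlite3.connect(":memory:")
elt_db.execute("CREATE TABLE raw_sales (name TEXT, amount TEXT)")
elt_db.executemany("INSERT INTO raw_sales VALUES (?, ?)", raw_rows)
elt_db.execute(
    """
    CREATE TABLE sales AS
    SELECT name, CAST(TRIM(amount) AS INTEGER) AS amount
    FROM raw_sales
    WHERE TRIM(amount) != '' AND TRIM(amount) NOT GLOB '*[^0-9]*'
    """
)

etl_total = etl_db.execute("SELECT SUM(amount) FROM sales").fetchone()[0]
elt_total = elt_db.execute("SELECT SUM(amount) FROM sales").fetchone()[0]
raw_count = elt_db.execute("SELECT COUNT(*) FROM raw_sales").fetchone()[0]
print(etl_total, elt_total, raw_count)
```

Both flows end with the same clean table. In the ELT version the raw rows are still sitting in `raw_sales`, so a failed transform can be rerun without going back to the source.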

Data storage is important, and it calls for a database design that can handle your data pipeline. One trick I have found useful is designing the data store as a “dev-qa-staging-prod” environment.
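One possible reading of the “dev-qa-staging-prod” idea is that each table exists once per environment and data is only ever promoted one stage at a time. The sketch below assumes that reading; the prefix naming scheme and the `promote` helper are hypothetical, and an in-memory dict stands in for the real data store.

```python
ENVIRONMENTS = ["dev", "qa", "staging", "prod"]


def table_name(environment: str, table: str) -> str:
    """Build an environment-specific table name, e.g. 'qa_orders'."""
    if environment not in ENVIRONMENTS:
        raise ValueError(f"unknown environment: {environment}")
    return f"{environment}_{table}"


def next_environment(current: str):
    """Return the stage that follows `current`, or None for prod."""
    index = ENVIRONMENTS.index(current)
    return ENVIRONMENTS[index + 1] if index + 1 < len(ENVIRONMENTS) else None


def promote(stores: dict, table: str, source_env: str) -> str:
    """Copy a table to the next environment and return that environment."""
    target_env = next_environment(source_env)
    if target_env is None:
        raise ValueError("prod is the last environment")
    source = stores[table_name(source_env, table)]
    stores[table_name(target_env, table)] = list(source)
    return target_env


stores = {"dev_orders": [{"id": 1}, {"id": 2}]}
promoted_to = promote(stores, "orders", "dev")
print(promoted_to, sorted(stores))
```

Because `promote` only moves to the next stage, data cannot skip from dev straight to prod.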

Once all the quality checks, data dictionary definitions, and cataloging are done, you can say you have a data product ready for consumption by analytics, ML, AI, A/B testing, etc.
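As a rough idea of what a quality check can look like, here is a minimal sketch that flags missing values and duplicate keys. The function name, the column names, and the sample rows are all assumptions for illustration; real checks depend on your own data dictionary.

```python
def run_quality_checks(rows: list[dict], required: list[str], key: str) -> list[str]:
    """Return a list of human-readable problems; empty means the data passed."""
    problems = []
    seen_keys = set()
    for index, row in enumerate(rows):
        # Check 1: every required column has a value.
        missing = [column for column in required if row.get(column) is None]
        if missing:
            problems.append(f"row {index}: missing {', '.join(missing)}")
        # Check 2: the key column is unique across rows.
        value = row.get(key)
        if value is not None:
            if value in seen_keys:
                problems.append(f"row {index}: duplicate {key}={value}")
            seen_keys.add(value)
    return problems


rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 1, "email": None},
]
problems = run_quality_checks(rows, required=["id", "email"], key="id")
print(problems)
```

An empty list is the signal that the data can move on to consumers.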

Looking to understand ETL basics? Refer to a hands-on post I made earlier.

Design a Data Pipeline with Azure Data Factory

Steps to create a simple data pipeline:

- Source Data: This can be stored in Azure Data Lake or Azure Blob Storage.

- Data Ingestion: Ingest data using the Copy activity in Azure Data Factory.

- Data Transformation: Still within Azure Data Factory, use a data transformation activity. It can apply different transformations, such as sorting, filtering, and aggregations.

- Load Data: Azure Data Factory can load the transformed data into your Azure data store (Cosmos DB, Data Warehouse, or Data Lake Store).

- Monitor Data Pipeline: Track the status of activities and detect any errors or failures. The pipeline can be automated with a schedule trigger, so we can run it on demand or on a set schedule.
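To give a feel for the ingestion and scheduling steps above, here is a rough sketch of what a pipeline with one Copy activity and a daily schedule trigger might look like as JSON, built with plain Python dictionaries. The pipeline, activity, dataset, and trigger names are all made up, and the exact properties should be checked against the Azure Data Factory documentation for your data stores; treat this as an outline of the shape, not a ready-to-deploy definition.

```python
import json

# Hypothetical pipeline with a single Copy activity.
# "SourceBlobDataset" and "SinkLakeDataset" are assumed dataset names.
copy_pipeline = {
    "name": "CopySalesPipeline",
    "properties": {
        "activities": [
            {
                "name": "CopyFromBlobToLake",
                "type": "Copy",
                "inputs": [
                    {"referenceName": "SourceBlobDataset", "type": "DatasetReference"}
                ],
                "outputs": [
                    {"referenceName": "SinkLakeDataset", "type": "DatasetReference"}
                ],
                "typeProperties": {
                    "source": {"type": "DelimitedTextSource"},
                    "sink": {"type": "DelimitedTextSink"},
                },
            }
        ]
    },
}

# Hypothetical trigger that runs the pipeline once a day.
daily_trigger = {
    "name": "DailyTrigger",
    "properties": {
        "type": "ScheduleTrigger",
        "typeProperties": {
            "recurrence": {
                "frequency": "Day",
                "interval": 1,
                "startTime": "2024-01-01T00:00:00Z",
                "timeZone": "UTC",
            }
        },
        "pipelines": [
            {
                "pipelineReference": {
                    "type": "PipelineReference",
                    "referenceName": copy_pipeline["name"],
                }
            }
        ],
    },
}

print(json.dumps(copy_pipeline, indent=2))
print(json.dumps(daily_trigger, indent=2))
```

The trigger refers to the pipeline by name, which is what lets the same pipeline run either on demand or on the schedule.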

What other tools or techniques have you used? Share in the comments 🙂
